AI-to-Human Handoff: Best Practices for Customer Support Escalation in 2026

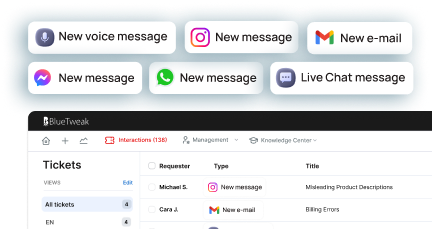


At BlueTweak, a high-performing AI-to-human handoff enables a seamless transition from an AI agent to a human agent, preserving full conversation context and customer data so customers never have to repeat the same information. When AI systems powered by natural language processing and machine learning reach a complexity threshold or detect customer frustration, the handoff process must ensure complete context and a smooth transition to human support. This allows human agents to apply human judgment, resolve complex issues, and deliver a stronger customer experience. To build a seamless handoff, support teams need clearly defined handoff triggers, structured conversation history, and continuous AI training.

An AI-to-human handoff is the structured transfer of a customer interaction from an AI system to a human agent, ensuring full conversation context and customer data are preserved so the customer does not need to repeat information.

This is the point where automation ends and human judgment takes over, and the quality of that transition determines whether resolution accelerates or breaks down. By the time escalation happens, the customer is already beyond self-service: often frustrated, time-conscious, and expecting resolution, not repetition.

A good handoff feels invisible: the agent picks up exactly where the AI left off, fully informed, and ready to act. A bad handoff forces the customer to start over, breaking trust instantly.

This applies across both chat and voice channels, though each brings different operational challenges.

The stakes are high. According to a PwC customer experience study, 73% of consumers say having to repeat information is one of the most frustrating parts of a support interaction, especially after being transferred. That frustration compounds quickly in AI-led journeys.

At its core, a broken AI-to-human handoff disrupts the customer experience by forcing repetition, losing conversation history, and reducing the effectiveness of both AI agents and human agents. Before solving the handoff, it’s worth understanding what failure actually costs.

Poor escalation creates a chain reaction across your support operation:

Gartner research shows that low-effort interactions cost 37% less than high-effort ones, while also reducing repeat contacts and escalations, which highlights how inefficient handoffs directly increase cost-to-serve.

Most teams treat AI-to-human handoff as a routing problem. It’s not. It’s a context problem. If the agent doesn’t inherit the full story, the system has already failed, regardless of how accurate the routing logic is.

Radu Dumitrescu, Head of Presale & Digital Transformation, BlueTweak

This is where most teams go wrong: they optimize when to escalate, but not how the escalation actually works.

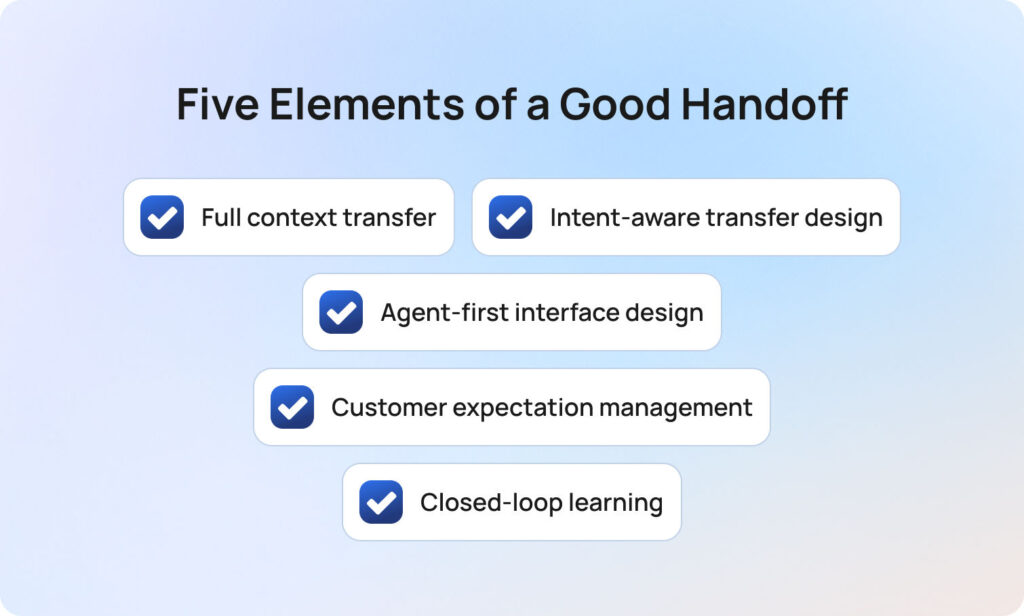

A seamless AI-to-human handoff depends on five core elements that ensure a smooth transition, maintain complete context, and enable human agents to resolve complex issues efficiently.

Once you’ve defined when escalation should happen, the real challenge is designing how that transition performs in practice. Most teams focus heavily on trigger logic, but that’s only one part of the system. A seamless handoff is the result of deliberate design across five interdependent elements. When even one is weak, the entire experience breaks down. BlueTweak applies this principle by structuring full conversation context into agent-ready summaries, rather than relying on raw conversation history.

Many platforms claim to “pass context,” but in reality, they pass raw conversation logs. That’s not context, it’s unstructured data.

A high-performing handoff delivers a structured context package that enables immediate understanding. This should include a clear summary of the issue, relevant customer and account data, identified intent, sentiment indicators, and any prior attempts at resolution.

The objective is not to provide more information, but to provide the right information in a usable format. If an agent needs to read through an entire transcript to understand the problem, the handoff has already introduced friction.

This is the foundation of an effective handoff from AI support agents to human agents. Full conversation context ensures the human agent can act immediately with a complete understanding of the customer issue.
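As a rough illustration of the difference between a raw transcript and a structured context package, the sketch below models the elements listed above (issue summary, customer and account data, intent, sentiment, prior attempts) as a small data structure. The class name `HandoffContext`, its field names, and the sample values are hypothetical and are not taken from any BlueTweak API.

```python
from dataclasses import dataclass, field


@dataclass
class HandoffContext:
    """Hypothetical agent-ready context package; all field names are illustrative."""
    issue_summary: str
    customer_id: str
    account_data: dict
    intent: str
    sentiment: str  # e.g. "negative", "neutral", "positive"
    prior_attempts: list = field(default_factory=list)

    def agent_brief(self) -> str:
        """Render a short brief so the agent does not have to read the transcript."""
        attempts = "; ".join(self.prior_attempts) or "none recorded"
        return (
            f"Issue: {self.issue_summary}\n"
            f"Intent: {self.intent} | Sentiment: {self.sentiment}\n"
            f"Already tried: {attempts}"
        )


# Sample values are invented for the example.
context = HandoffContext(
    issue_summary="Customer was charged twice for the March invoice",
    customer_id="C-1001",
    account_data={"plan": "Pro"},
    intent="billing_dispute",
    sentiment="negative",
    prior_attempts=["AI confirmed duplicate charge", "AI offered help article"],
)
print(context.agent_brief())
```

The point of the `agent_brief` method is the one made above: the agent receives the right information in a usable format, not a longer log.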

The common distinction between warm and cold transfers is useful, but incomplete. In practice, escalation design should reflect the nature of the interaction. Lower-complexity, low-emotion queries can typically be handled through efficient cold transfers, provided the context is well structured. As complexity increases, so does the need for richer summarisation and clearer framing of the issue.

Where emotion, urgency, or risk is involved, a more considered approach is required. In these cases, a warm transfer, where the agent is briefed before engaging the customer, helps preserve continuity and reduces the likelihood of escalation fatigue. Sensitive or regulated scenarios may require even more control, including priority routing and specialist handling.

The key point is that handing off from AI to humans on complex questions should be intentionally designed, not treated as a uniform fallback. In short, intent-aware design ensures the escalation flow adapts to complexity, emotion, and risk rather than treating all escalations equally.
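One way to picture intent-aware escalation is as a small decision function. The sketch below is a minimal example under stated assumptions: the 1 to 5 complexity scale, the threshold of 3, and the names `Escalation`, `TransferMode`, `choose_transfer_mode`, and `summary_detail` are all hypothetical, and a real system would likely weigh more signals than these four.

```python
from dataclasses import dataclass
from enum import Enum


class TransferMode(Enum):
    COLD = "cold"          # structured context only, no live briefing
    WARM = "warm"          # agent is briefed before engaging the customer
    PRIORITY = "priority"  # priority routing to a specialist


@dataclass
class Escalation:
    complexity: int         # assumed scale: 1 (simple) to 5 (complex)
    negative_emotion: bool
    urgent: bool
    regulated: bool


def choose_transfer_mode(e: Escalation) -> TransferMode:
    """Pick a transfer style from risk and emotion, as described above."""
    if e.regulated:
        return TransferMode.PRIORITY
    if e.negative_emotion or e.urgent:
        return TransferMode.WARM
    return TransferMode.COLD


def summary_detail(e: Escalation) -> str:
    """Richer summarisation as complexity rises (threshold is an assumption)."""
    return "detailed" if e.complexity >= 3 else "brief"


simple_query = Escalation(complexity=1, negative_emotion=False, urgent=False, regulated=False)
print(choose_transfer_mode(simple_query).value, summary_detail(simple_query))
```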

Even with strong AI performance, a poorly designed agent experience will undermine the handoff. At the point of connection, the agent interface must immediately communicate three things: the customer’s goal, what has already been attempted, and what is likely required next.

If this information is fragmented, hidden, or difficult to interpret, agents will revert to asking clarifying questions that the customer believes they have already answered. This is one of the most common (and avoidable) sources of frustration in escalated interactions.

Handoff quality is therefore not just a function of AI capability, but of how effectively that capability is surfaced to the human agent. The best systems are designed around agent comprehension, not data availability, and effective interface design ensures human agents can quickly interpret conversation history and deliver accurate answers without delay.

From the customer’s perspective, escalation introduces uncertainty. Without clear communication, the experience can feel like being passed between systems rather than progressing toward resolution.

The AI should actively manage this transition by acknowledging that further support is required, confirming that the next agent has full visibility of the issue, and setting expectations around what will happen next. This includes providing realistic wait times and clarifying whether additional input will be needed.

This becomes particularly important when an AI bot hands a customer dispute over to a human, where trust and reassurance play a significant role in overall satisfaction.

A well-managed handoff does more than transfer the interaction; it maintains a sense of momentum and confidence. Managing expectations ensures a smooth transition that maintains trust and reduces uncertainty during human escalation.

In many organisations, the handoff marks the end of the AI interaction. In high-performing teams, it marks the beginning of improvement. Every escalation provides a valuable signal: Was the issue suitable for automation? Did the agent receive sufficient context? Was the issue resolved efficiently?

Capturing and analysing this data allows teams to continuously refine both AI performance and escalation logic. Over time, this reduces unnecessary handoffs, improves resolution rates, and strengthens the overall support model.

Without this feedback loop, handoffs remain static. With it, they become a mechanism for ongoing optimisation. Feedback loops allow AI systems to learn from handoffs, improving future AI responses and reducing unnecessary escalation.

Effective AI-to-human handoffs combine structured conversation context, intelligent handoff triggers, and strong human agent enablement to deliver a seamless transition across all customer interactions.

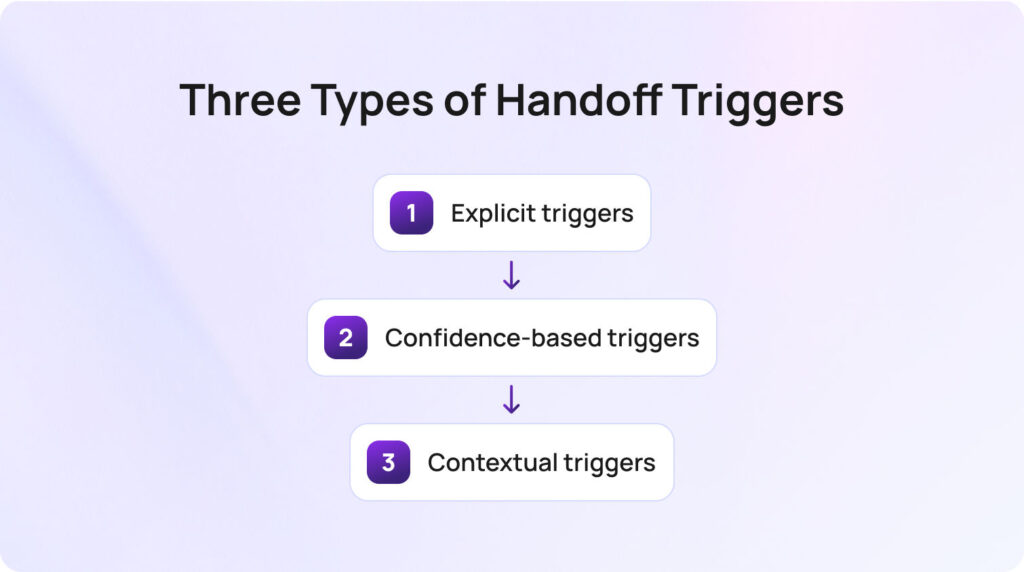

Effective AI agent handoffs rely on clearly defined handoff triggers that determine when AI should escalate to a human agent based on complexity, confidence, or customer intent. To build a reliable system, you need a clear framework for when escalation should happen. Most teams only implement one type, but the best systems use all three.

When a customer directly asks for a human, the system should escalate immediately with no loops, no friction, no retries. Ignoring this is one of the fastest ways to damage trust. In short, explicit triggers ensure customers can access human assistance immediately when they request it.

These activate when the AI drops below a defined confidence threshold or fails to resolve an issue after a set number of attempts. The challenge is calibration: too aggressive, and you overload agents; too loose, and customers get stuck. Essentially, confidence-based triggers prevent AI systems from overextending beyond their capability and reduce customer frustration.
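The explicit and confidence-based triggers described so far could be sketched as a single check that runs on every customer message. The phrase list, the 0.6 confidence threshold, and the limit of two failed attempts are placeholder assumptions that each team would need to calibrate, and simple phrase matching stands in for whatever intent detection a real system uses.

```python
from typing import Optional

# All three values below are illustrative assumptions, not recommended settings.
HUMAN_REQUEST_PHRASES = ("speak to a human", "talk to an agent", "real person")
CONFIDENCE_THRESHOLD = 0.6
MAX_FAILED_ATTEMPTS = 2


def escalation_reason(message: str, ai_confidence: float, failed_attempts: int) -> Optional[str]:
    """Return why the conversation should escalate, or None to stay with the AI."""
    text = message.lower()
    # Explicit trigger: checked first so a direct request is never looped or retried.
    if any(phrase in text for phrase in HUMAN_REQUEST_PHRASES):
        return "explicit_request"
    # Confidence-based triggers: low confidence or too many failed attempts.
    if ai_confidence < CONFIDENCE_THRESHOLD:
        return "low_confidence"
    if failed_attempts >= MAX_FAILED_ATTEMPTS:
        return "too_many_attempts"
    return None


print(escalation_reason("I want to speak to a human", 0.95, 0))
```

Checking the explicit request before anything else reflects the rule above that a direct request for a human should escalate immediately, regardless of how confident the AI is.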

These are the most advanced and the most valuable. They include:

This is where handing off from AI to humans on complex questions becomes effective, catching edge cases that rules alone would miss. Contextual triggers allow AI to detect complex issues and escalate based on real customer interactions, not just predefined rules.

While the goal and principles of a seamless handoff remain the same, AI-to-human handoff design differs significantly between voice and web chat due to differences in timing, conversation history, and real-time expectations.

In chat-based support, handoffs are inherently more forgiving. Full transcripts are available by default, context can be structured and reviewed before engagement, and customers can wait in a queue without losing the thread of the conversation. When designed well, the transition can feel almost invisible.

Voice, by contrast, operates under real-time constraints where silence, repetition, or hesitation are immediately felt by the customer. This changes how handoffs need to be designed.

In voice environments, warm transfers become far more important. Providing the receiving agent with a live “whisper” briefing before they join the call allows them to enter the conversation with confidence, rather than asking the customer to restate the issue. Without this, even well-routed escalations can feel disjointed.

Real-time transcription also becomes a critical enabler. Unlike chat, there is no native transcript to rely on mid-interaction. Live transcription ensures the agent has immediate visibility into what has already been discussed, reducing cognitive load and improving response quality.

There is also a greater need to actively manage dead air during the transfer. In chat, a short delay is expected. In voice, even a few seconds of silence can create uncertainty. Clear messaging, hold protocols, and seamless routing all play a role in maintaining confidence during the transition.

Finally, voice handoffs place more emphasis on infrastructure reliability. Call quality, latency, and protocol stability (such as SIP performance) directly impact the experience in ways that chat simply does not.

The important distinction is that AI handoffs on complex questions cannot be implemented as a single, channel-agnostic rule set. They must be intentionally designed for the constraints and expectations of each medium.

This is precisely where modern platforms need to go further than routing logic alone, ensuring that context, timing, and agent readiness are orchestrated across both chat and voice in a consistent system. BlueTweak’s approach to handoff design reflects this shift, focusing not just on escalation decisions, but on how those escalations are experienced end-to-end by both customers and agents.

Measuring AI-to-human handoff performance requires more than tracking volume; it involves evaluating how effectively AI systems transfer conversation context, enable human support, and improve customer outcomes. If you can’t measure your AI-to-human handoff, you can’t improve it.

The challenge is that most teams track only escalation volume and treat handoff rate as a success metric in isolation. In reality, volume tells you very little about quality.

A high-performing handoff should be evaluated as a system, not a single data point. That means looking at how effectively context is transferred, how well agents resolve escalated cases, and whether the customer experience improves or deteriorates after escalation.

Handoff rate measures the percentage of AI interactions that escalate to a human agent. On its own, this number is easy to misinterpret. A low rate might indicate strong automation, or it might signal that customers are being trapped in AI loops. A high rate could reflect poor AI performance, or simply a use case that genuinely requires human intervention.

The metric only becomes meaningful when paired with outcomes. The goal is not to minimise handoffs, but to ensure they occur at the right moments, for the right reasons. Handoff rate should reflect meaningful escalation, not AI failure or poor experience design.
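As a simple worked example, handoff rate is the number of escalated interactions divided by the total number of AI interactions. The function name and the sample figures below are made up for illustration.

```python
def handoff_rate(ai_interactions: int, escalated: int) -> float:
    """Percentage of AI interactions that were escalated to a human agent."""
    if ai_interactions == 0:
        return 0.0  # avoid dividing by zero when there is no traffic
    return 100.0 * escalated / ai_interactions


# Invented sample figures: 300 escalations out of 2,000 AI interactions.
print(handoff_rate(ai_interactions=2000, escalated=300))
```

On its own this number says nothing about quality, which is why it is best read alongside the outcome metrics that follow.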

This metric answers a more fundamental question: Does the agent have everything they need at the moment of connection? In practice, this is best measured through agent feedback or QA review, rather than system logs. Agents should be able to quickly assess whether the context provided was sufficient, partially useful, or missing critical information.

A consistently low score here is a clear signal that the handoff is failing before the agent even engages. It is also one of the strongest predictors of poor downstream performance, particularly in complex or sensitive cases. Complete context is essential for enabling human agents to resolve customer issues efficiently.
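One possible way to turn agent feedback into a trackable number is to tally the three ratings mentioned above and report each as a share of all rated handoffs. The rating labels and the sample data are assumptions made for this sketch.

```python
from collections import Counter

# Hypothetical labels for the three ratings an agent or QA reviewer can give.
RATINGS = ("sufficient", "partially_useful", "missing_critical")


def context_completeness(ratings: list) -> dict:
    """Share of handoffs per rating, as percentages of all rated handoffs."""
    total = len(ratings)
    if total == 0:
        return {rating: 0.0 for rating in RATINGS}
    counts = Counter(ratings)
    return {rating: 100.0 * counts[rating] / total for rating in RATINGS}


# Invented sample: ten rated handoffs.
sample = ["sufficient"] * 6 + ["partially_useful"] * 3 + ["missing_critical"] * 1
print(context_completeness(sample))
```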

Post-handoff FCR measures whether the agent resolves the issue within the same interaction after escalation. This is where handoff quality becomes tangible. If escalated cases regularly require follow-up, it suggests one of two issues: either the wrong cases are being escalated, or the agent is not receiving the context needed to act effectively.

Tracking this metric specifically for escalated interactions, rather than blending it into overall FCR, provides a much clearer view of how well your handoff process is performing. Post-handoff resolution shows whether the transition layer actually improves outcomes.
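A minimal sketch of tracking FCR for escalated interactions only, rather than blending it into overall FCR, might look like the following. The record keys `escalated` and `resolved_first_contact` are hypothetical field names, and the sample records are invented.

```python
def post_handoff_fcr(interactions: list) -> float:
    """First-contact resolution rate (%) computed over escalated interactions only."""
    escalated = [i for i in interactions if i["escalated"]]
    if not escalated:
        return 0.0
    resolved = sum(1 for i in escalated if i["resolved_first_contact"])
    return 100.0 * resolved / len(escalated)


# Invented sample: four escalated interactions, one fully automated.
sample_interactions = [
    {"escalated": True, "resolved_first_contact": True},
    {"escalated": True, "resolved_first_contact": True},
    {"escalated": True, "resolved_first_contact": False},
    {"escalated": True, "resolved_first_contact": True},
    {"escalated": False, "resolved_first_contact": True},  # excluded from the metric
]
print(post_handoff_fcr(sample_interactions))
```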

Repeat contact rate captures whether customers return within a defined window, typically 24 to 48 hours, after an escalated interaction. This is one of the most reliable indicators of hidden failure. Even if an issue appears resolved in the moment, a follow-up contact suggests that something was missed, either in the handoff, the resolution, or the clarity of communication.

When viewed alongside the handoff rate, this metric helps distinguish between necessary escalation and ineffective escalation. In short, repeat contact reveals hidden gaps in the handoff process and overall customer support experience.
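Repeat contact rate can be approximated by checking, for each escalated interaction, whether the same customer made contact again within the chosen window. The sketch below assumes a 48-hour default window and a simple `(customer_id, timestamp)` tuple format; both are illustrative choices, not a prescribed schema.

```python
from datetime import datetime, timedelta


def repeat_contact_rate(escalations: list, contacts: list, window_hours: int = 48) -> float:
    """Percentage of escalated interactions followed by a new contact in the window.

    Both arguments are lists of (customer_id, timestamp) tuples (assumed format).
    """
    if not escalations:
        return 0.0
    window = timedelta(hours=window_hours)
    repeats = 0
    for customer_id, closed_at in escalations:
        if any(
            cid == customer_id and closed_at < ts <= closed_at + window
            for cid, ts in contacts
        ):
            repeats += 1
    return 100.0 * repeats / len(escalations)


# Invented sample data.
escalations = [
    ("C-1", datetime(2026, 1, 5, 10, 0)),
    ("C-2", datetime(2026, 1, 5, 11, 0)),
]
contacts = [
    ("C-1", datetime(2026, 1, 6, 9, 0)),   # 23 hours later: inside the window
    ("C-2", datetime(2026, 1, 9, 11, 0)),  # 4 days later: outside the window
]
print(repeat_contact_rate(escalations, contacts))
```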

CSAT delta compares customer satisfaction between fully automated interactions and those that required escalation. This is where many teams uncover uncomfortable truths. If escalated interactions consistently score lower than automated ones, the issue is rarely the agent; it is almost always the handoff experience.

A well-designed AI-to-human handoff should increase customer confidence, not diminish it. When the opposite happens, it signals a breakdown in continuity, expectation setting, or context transfer.
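CSAT delta can be sketched as the difference between the mean satisfaction score of escalated interactions and that of fully automated ones. The 1 to 5 scale and the sample scores are assumptions for the example; a negative result means escalated interactions scored lower.

```python
from statistics import mean


def csat_delta(automated_scores: list, escalated_scores: list) -> float:
    """Mean CSAT of escalated interactions minus mean CSAT of automated ones."""
    return mean(escalated_scores) - mean(automated_scores)


# Invented sample scores on an assumed 1 to 5 scale.
automated = [4, 5, 4, 5]
escalated = [3, 4, 3, 4]
print(csat_delta(automated, escalated))
```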

Taken together, these metrics shift handoff from a binary event into a measurable system. The focus should not be on simply moving conversations from AI to human, but on ensuring that every escalation improves the likelihood of fast, accurate, and satisfying resolution. CSAT differences highlight whether human involvement enhances or weakens the customer experience.

BlueTweak approaches AI-to-human handoff as a complete system, ensuring AI agents and human agents work together with full conversation context, structured customer data, and continuous optimisation.

By this point, the standard for a high-performing AI-to-human handoff is clear. The real question is not whether escalation exists within your support stack, but whether it consistently delivers under real-world conditions across channels, edge cases, and moments of heightened customer emotion.

This is where many platforms fall short. They treat handoff as a routing event, rather than a coordinated system spanning AI decisioning, context management, agent experience, and continuous optimisation.

Most support teams don’t have a handoff problem. What they’re actually struggling with is continuity, and BlueTweak addresses this directly. Rather than treating AI-to-human handoff as a feature, it is designed as an end-to-end system that ensures the interaction does not restart, degrade, or lose momentum at the point of escalation.

In practice, that means:

This system-level approach is what allows handoffs to perform reliably, not just in controlled scenarios, but in the unpredictable reality of live customer interactions.

This is where BlueTweak’s approach stands apart, treating handoff as the connective tissue between automation and human expertise.

A clear example of this can be seen in BlueTweak’s work with global BPO provider Conectys, where consolidating context and streamlining agent workflows led to faster resolution times and measurable improvements in customer satisfaction.

The focus should be on ensuring that escalation improves the outcome.

A high-performing AI-to-human handoff combines artificial intelligence and human judgment to deliver a seamless transition that improves both customer experience and business outcomes.

The AI-to-human handoff is where artificial intelligence and human judgment intersect to resolve complex issues. The most effective support teams understand that success is not defined by how often handoffs occur, but by how well they perform when they do.

A seamless transition from AI-to-human support depends on maintaining full conversation context, preserving customer data, and enabling the human agent to act with a deep understanding of the situation from the very first response.

This requires a deliberate balance. AI systems must handle scale, speed, and accurate answers through natural language processing and machine learning, while human involvement provides the nuance, emotional intelligence, and decision-making needed for complex cases.

When this balance is achieved, the impact is significant:

For teams asking ‘how do I build seamless AI-to-human handoff’, the answer is not a single feature or tool. It is a system: one that combines intelligent handoff triggers, complete context transfer, and continuous optimisation through feedback and AI training. This system should ensure that every handoff improves the outcome.

In customer support in 2026, the true competitive advantage is not in automation alone, but in how effectively you combine AI and human assistance into a single, seamless experience. To see how BlueTweak brings this AI-to-human handoff framework to life in real-world customer support environments, you can explore the platform or book a live demo.

An AI-to-human handoff is the process in which an AI agent or AI chatbot transfers a customer interaction to a human agent when further assistance is required. This typically happens when AI systems cannot resolve complex issues or when human intervention is needed. A successful handoff preserves full conversation history, customer data, and conversation context to ensure continuity between AI and human agents.

To build a seamless handoff, support teams must combine clear handoff triggers, complete context transfer, and strong agent experience design. This includes maintaining full conversation context, passing prior context from AI responses, and ensuring human agents can act immediately without asking for the same information again.

AI should initiate human escalation when it detects complex cases, emotional cues, or low confidence in its responses. Using sentiment analysis and intent recognition, AI systems can identify when customer frustration is increasing and trigger a smooth transition to human support at the right moment.

Full conversation context ensures that the human agent has a deep understanding of the customer’s issue from the start. Without it, customers are forced to repeat the same information, which negatively impacts customer experience and increases resolution time.

AI systems enhance customer experience by handling high-volume customer interactions, delivering accurate answers, and routing complex issues efficiently. When combined with human assistance and human judgment, they create a balance between speed and quality that improves customer loyalty and satisfaction.

Common challenges include losing conversation history, poor handoff triggers, and a lack of complete context for the receiving agent. These issues lead to customer frustration, inefficient escalation flow, and lower business outcomes.

AI agent handoffs improve through continuous AI training and feedback loops. By analysing customer interactions, refining AI responses, and identifying where human help was required, AI platforms can learn to handle more complex issues while improving the quality of human escalation.

As Head of Digital Transformation, Radu oversees multiple departments across the company, providing visibility into what happens in product and what customers need. With more than 8 years in technology, and part of BlueTweak since the beginning, Radu shifted from a developer role (addressing end-customer needs) to a more business-oriented one, to influence and stay in touch with the people who use the actual technology.