Human-in-the-Loop AI: How to Stay in Control While You Scale Support in 2026



Human-in-the-loop AI is an AI operating model where machine intelligence and human judgment continuously work together to review, correct, and improve outputs before they impact customers. Rather than replacing humans, it ensures AI systems stay accurate, accountable, and aligned with real-world context. Human-in-the-loop AI is not a temporary safeguard while models mature. It is the operating model that allows AI to scale customer support without losing accuracy, trust, or CX quality. Organizations scaling AI in customer-facing environments are increasingly prioritizing structured human oversight to maintain quality and compliance, rather than reducing it as automation grows.

Human-in-the-loop AI is an approach to artificial intelligence in which human judgment is embedded in the decision-making process to guide, validate, or correct AI outputs in real time. It forms the foundation of modern AI-assisted customer service.

In practical terms, the human-in-the-loop AI definition is simple: AI generates outputs, and humans provide oversight, feedback, or approval to ensure accuracy, reduce risk, and improve performance over time.

A 2025 Deloitte report found that AI adoption in customer service has increased from 46% in 2023 to 61% in 2025, underscoring how quickly AI is moving from experimentation to operational reality in support environments. This shift is driving renewed focus on structured human-in-the-loop AI frameworks, particularly in high-volume support environments where accuracy and customer trust directly impact retention.

There are three primary human-in-the-loop models used across AI systems: human-on-the-loop, human-in-the-loop, and human-as-the-loop.

In customer support operations, the right model depends on interaction type, confidence thresholds, and the potential impact of getting the decision wrong.

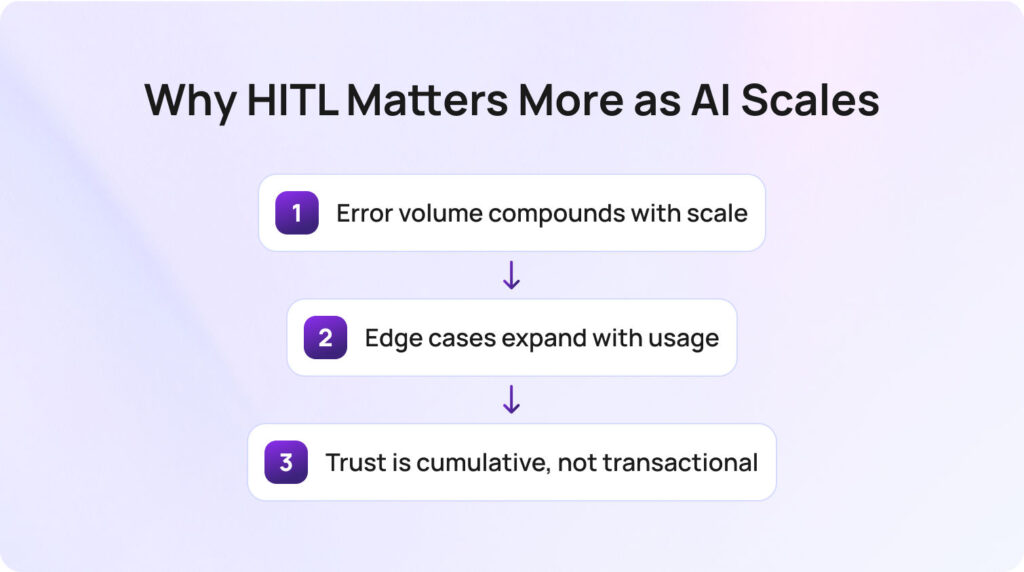

Most ML-focused explanations of human-in-the-loop AI assume that human oversight is a bottleneck that should be reduced over time. In customer support operations, the opposite is true: oversight becomes more important as scale increases, because scaling AI does not just increase efficiency; it multiplies risk exposure.

“The biggest misconception in AI-driven support is that scale removes the need for human control. In reality, scale amplifies every error, every edge case, and every blind spot in the model.”

Radu Dumitrescu, Head of Presale & Digital Transformation at BlueTweak

There are three key reasons this matters:

Error volume compounds with scale: even a small error rate becomes operationally significant at scale. A system handling 50,000 monthly conversations will surface far more misclassifications than one handling 500. Without structured human review, these errors remain invisible until they affect CSAT and retention.

Edge cases expand with usage: as AI systems are exposed to broader real-world inputs, they encounter long-tail queries that were underrepresented in training data. These edge cases are where generative AI and ML models are most likely to produce misleading outputs or fail entirely.

Trust is cumulative, not transactional: in customer support, one poor interaction can permanently shift behavior. Customers who experience incorrect AI responses are more likely to bypass automation entirely and request human agents in future interactions, reducing the ROI of automation.
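
To make the first of these points concrete, here is a minimal arithmetic sketch. The 2% misclassification rate is a hypothetical figure chosen only for illustration; the two monthly volumes are the ones mentioned above.

```python
def expected_errors(monthly_conversations: int, error_rate: float) -> float:
    """Expected number of misclassified conversations per month."""
    return monthly_conversations * error_rate


# Hypothetical error rate, for illustration only (not a measured figure).
ASSUMED_ERROR_RATE = 0.02

for volume in (500, 50_000):
    errors = expected_errors(volume, ASSUMED_ERROR_RATE)
    print(f"{volume:>6} conversations -> about {errors:.0f} errors per month")
```

At the same assumed rate, the smaller team sees roughly 10 errors a month while the larger one sees roughly 1,000, which is why an error rate that looks acceptable at low volume can still call for structured review at scale.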

Nearly nine in ten executives say they have already partially or fully implemented AI in customer-facing functions such as customer service and marketing, according to PwC’s 2025 Customer Experience Survey. As AI moves from experimentation into live support environments, human-in-the-loop AI becomes critical to maintaining accuracy, trust, and customer experience quality at scale. This is why many teams are rethinking not just how AI improves customer support, but how AI and human oversight work together to sustain that quality.

The challenge most teams face is not understanding what human-in-the-loop AI is, but knowing how to apply it consistently across channels in modern, omnichannel customer support environments. The key is to align HITL models with interaction risk, not just automation capability.

Routine, high-confidence queries such as password resets or order tracking are best suited to human-on-the-loop setups. Here the AI agent acts independently while humans monitor performance, intervening through suggested-response workflows and approval layers only when exceptions arise.

More sensitive interactions require tighter control. Complaints, billing disputes, or refund requests should use a human-in-the-loop model where AI proposes responses and human agents approve them before sending.

High-emotion or high-value interactions, such as enterprise escalations or retention-critical conversations, should remain human-as-the-loop, where AI supports but does not lead decision-making. This is where AI agents and human-in-the-loop setups become critical, combining chatbot efficiency with human agents to handle complexity and nuance.

To operationalise this at scale, support teams need a clear framework that maps interaction risk to the right level of human oversight:

| HITL Model | When to Apply | AI Role | Human Role | Oversight Level |
| --- | --- | --- | --- | --- |
| Human-on-the-loop | Routine, high-confidence queries | Acts autonomously | Monitors metrics and exceptions | Low |
| Human-in-the-loop | Sensitive or evolving workflows | Drafts response | Reviews and approves | Medium |
| Human-as-the-loop | High-value or emotional cases | Assists with insights | Leads interaction | High |
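
One way to express this framework in code is a simple lookup from interaction type to oversight model. The sketch below is illustrative only: the intent labels, the `ROUTING` table, and the fallback for unknown intents are all assumptions, not part of any specific platform.

```python
from enum import Enum


class HitlModel(Enum):
    HUMAN_ON_THE_LOOP = "human-on-the-loop"  # AI acts, humans monitor
    HUMAN_IN_THE_LOOP = "human-in-the-loop"  # AI drafts, humans approve
    HUMAN_AS_THE_LOOP = "human-as-the-loop"  # humans lead, AI assists


# Hypothetical intent labels, grouped by the risk tiers in the table above.
ROUTING = {
    "password_reset": HitlModel.HUMAN_ON_THE_LOOP,
    "order_tracking": HitlModel.HUMAN_ON_THE_LOOP,
    "complaint": HitlModel.HUMAN_IN_THE_LOOP,
    "billing_dispute": HitlModel.HUMAN_IN_THE_LOOP,
    "refund_request": HitlModel.HUMAN_IN_THE_LOOP,
    "enterprise_escalation": HitlModel.HUMAN_AS_THE_LOOP,
    "retention_risk": HitlModel.HUMAN_AS_THE_LOOP,
}


def select_model(intent: str) -> HitlModel:
    """Return the oversight model for an intent.

    Unknown intents fall back to human approval (an assumed, cautious default).
    """
    return ROUTING.get(intent, HitlModel.HUMAN_IN_THE_LOOP)


print(select_model("order_tracking").value)
print(select_model("something_new").value)
```

Falling back to human approval for unmapped intents is a design choice: a new or unrecognized interaction type is treated as unproven until someone explicitly assigns it a tier.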

The most effective human-in-the-loop approach also depends on confidence scoring. If the model's confidence in a response falls below a defined threshold, the interaction should automatically escalate to human review. These thresholds are not static; they must be reviewed regularly using live performance data, including CSAT delta and repeat contact rates.
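
A minimal sketch of confidence-based escalation and periodic threshold review might look like the following. It assumes the model exposes a confidence score between 0 and 1; the names `Draft`, `needs_human_review`, and `review_threshold`, along with every numeric value, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    text: str
    confidence: float  # assumed to be a score in [0, 1]


def needs_human_review(draft: Draft, threshold: float) -> bool:
    """Escalate when the model's confidence falls below the threshold."""
    return draft.confidence < threshold


def review_threshold(
    threshold: float,
    csat_delta: float,
    repeat_contact_rate: float,
    max_repeat_rate: float = 0.15,  # hypothetical tolerance
    step: float = 0.05,             # hypothetical adjustment size
) -> float:
    """Tighten the threshold when live quality signals worsen."""
    if csat_delta < 0 or repeat_contact_rate > max_repeat_rate:
        return round(min(1.0, threshold + step), 2)
    return threshold


threshold = 0.80
draft = Draft(text="Your refund has been issued.", confidence=0.72)
print(needs_human_review(draft, threshold))

# CSAT dropped since the last review, so the threshold is raised.
threshold = review_threshold(threshold, csat_delta=-0.3, repeat_contact_rate=0.10)
print(threshold)
```

This sketch only ever tightens the threshold. Whether and when to relax it again is a policy decision that the review process would need to define.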

Equally important is building the feedback loop, supported by quality assurance and analytics, to continuously evaluate model performance and the outcomes of human intervention. Every correction made by a human becomes training data for future model updates. This is where active learning and machine teaching reinforce one another in real production environments.
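
As a rough illustration of how corrections can be captured in a structured way, the sketch below logs each AI draft next to the reply the agent actually sent. The `Correction` and `FeedbackLog` classes and the output format are assumptions; a real pipeline would depend on how the model is retrained.

```python
from dataclasses import dataclass, field


@dataclass
class Correction:
    conversation_id: str
    ai_draft: str
    final_reply: str

    @property
    def was_edited(self) -> bool:
        # Whitespace-only differences are not treated as corrections.
        return self.ai_draft.strip() != self.final_reply.strip()


@dataclass
class FeedbackLog:
    corrections: list[Correction] = field(default_factory=list)

    def record(self, correction: Correction) -> None:
        self.corrections.append(correction)

    def training_examples(self) -> list[dict]:
        """Keep only edited drafts, since those carry a correction signal."""
        return [
            {"rejected": c.ai_draft, "accepted": c.final_reply}
            for c in self.corrections
            if c.was_edited
        ]

    def edit_rate(self) -> float:
        """Share of AI drafts that agents changed before sending."""
        if not self.corrections:
            return 0.0
        return sum(c.was_edited for c in self.corrections) / len(self.corrections)


log = FeedbackLog()
log.record(Correction("c1", "Your order ships Monday.", "Your order ships Monday."))
log.record(Correction("c2", "Refunds take 3 days.", "Refunds take 5 to 7 business days."))
print(log.edit_rate())
print(log.training_examples())
```

Tracking the edit rate alongside the examples gives a simple signal of how often human reviewers disagree with the model.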

Finally, scalable HITL systems rely on automated catch layers. Rather than reviewing every interaction, teams should use sentiment analysis, anomaly detection, and intent classification to surface only the conversations most likely to require human intervention.
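
The catch-layer idea can be sketched as a filter over signals that upstream models have already computed. Everything here is hypothetical: the `Signals` fields, the score ranges, the thresholds, and the list of sensitive intents are placeholders for whatever a team's own sentiment, anomaly, and intent models produce.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    conversation_id: str
    sentiment: float      # assumed range: -1 (negative) to 1 (positive)
    anomaly_score: float  # assumed range: 0 (typical) to 1 (unusual)
    intent: str


# Hypothetical intent labels that always warrant a human look.
SENSITIVE_INTENTS = {"complaint", "billing_dispute", "refund_request"}


def review_reasons(
    s: Signals,
    min_sentiment: float = -0.4,  # hypothetical threshold
    max_anomaly: float = 0.8,     # hypothetical threshold
) -> list[str]:
    """Return the reasons, if any, that a conversation needs human review."""
    reasons = []
    if s.sentiment < min_sentiment:
        reasons.append("negative sentiment")
    if s.anomaly_score > max_anomaly:
        reasons.append("anomalous conversation")
    if s.intent in SENSITIVE_INTENTS:
        reasons.append("sensitive intent")
    return reasons


def review_queue(batch: list[Signals]) -> list[tuple[str, list[str]]]:
    """Surface only the conversations with at least one review reason."""
    return [(s.conversation_id, r) for s in batch if (r := review_reasons(s))]


batch = [
    Signals("c1", sentiment=0.6, anomaly_score=0.1, intent="order_tracking"),
    Signals("c2", sentiment=-0.7, anomaly_score=0.2, intent="complaint"),
    Signals("c3", sentiment=0.1, anomaly_score=0.9, intent="password_reset"),
]
for conversation_id, reasons in review_queue(batch):
    print(conversation_id, reasons)
```

Returning the reasons alongside the conversation ID lets reviewers see why an interaction was flagged, and later lets the team check which signals actually predict a needed intervention.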

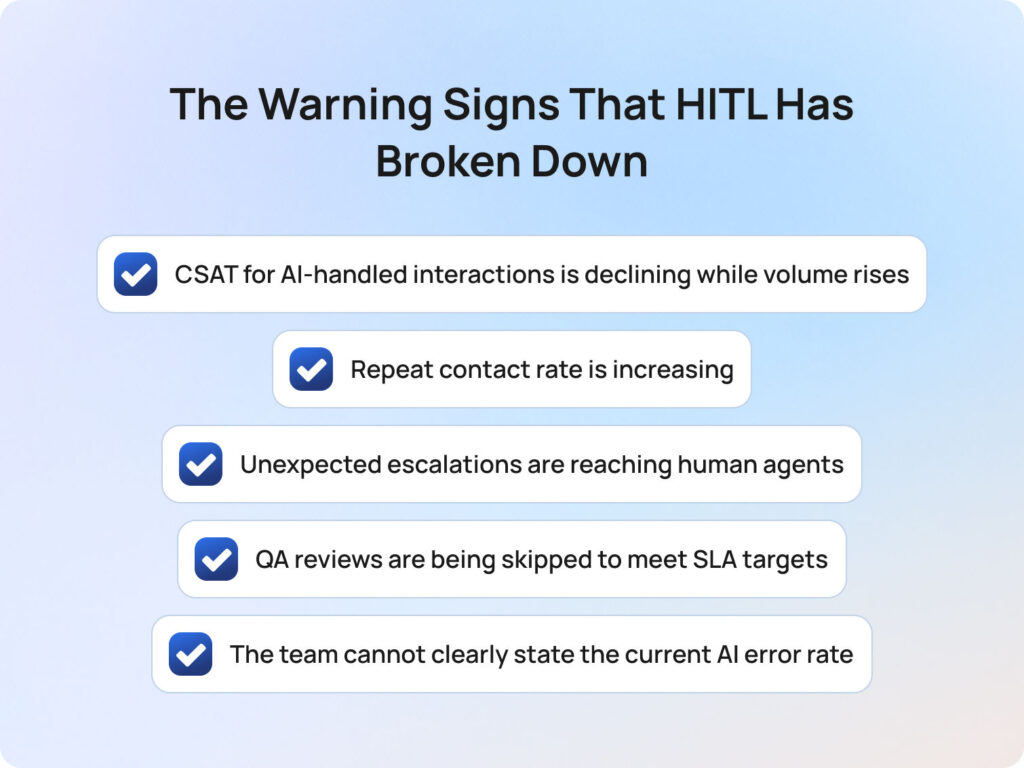

When human-in-the-loop systems fall out of sync with AI scale, performance rarely fails all at once. Instead, it degrades quietly, showing up first in operational metrics before it becomes visible to customers.

The most effective teams treat the following signals as early warnings, not lagging indicators:

Any one of these signals warrants immediate review of your confidence thresholds, escalation rules, and feedback loop design. Left unaddressed, they compound quickly as volume increases. Many of these signals emerge during the early stages of implementing AI in customer support, particularly when oversight models are not designed alongside the technology itself.

BlueTweak is designed around the principle that, in conversational AI for customer service, AI and human oversight must work as a continuous feedback cycle rather than a static deployment.

In practice, BlueTweak’s suggested reply functionality enables a native human-in-the-loop workflow: the AI generates a response, and the human agent validates or edits it before sending. This ensures accuracy while preserving speed in live customer support environments.

For human-on-the-loop operations, BlueTweak’s analytics layer aggregates interaction performance data, allowing teams to monitor AI models without reviewing every individual case. This includes CSAT trends, containment rates, and repeat contact signals.
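
To illustrate the kind of aggregate monitoring described here, the following sketch computes three summary metrics from a batch of conversations. It is not BlueTweak's API: the `Conversation` fields are hypothetical, and containment rate is assumed to mean the share of conversations resolved without a human handoff.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Conversation:
    handled_by_ai_only: bool  # no human handoff occurred (assumed flag)
    repeat_contact: bool      # customer returned about the same issue (assumed flag)
    csat: Optional[int]       # survey score, or None if the customer gave no rating


def summarize(conversations: list[Conversation]) -> dict:
    """Aggregate oversight metrics without inspecting individual cases."""
    total = len(conversations)
    if total == 0:
        return {"containment_rate": 0.0, "repeat_contact_rate": 0.0, "avg_csat": None}
    rated = [c.csat for c in conversations if c.csat is not None]
    return {
        "containment_rate": sum(c.handled_by_ai_only for c in conversations) / total,
        "repeat_contact_rate": sum(c.repeat_contact for c in conversations) / total,
        "avg_csat": sum(rated) / len(rated) if rated else None,
    }


sample = [
    Conversation(True, False, 5),
    Conversation(True, False, 4),
    Conversation(True, True, None),
    Conversation(False, False, 3),
]
print(summarize(sample))
```

Comparing these figures across reporting periods, rather than reading them in isolation, is what turns them into the trend signals mentioned above.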

The platform’s QA module extends this further by scoring both human and AI outputs against the same framework. This creates a consistent benchmark for evaluating model behavior, improving training data quality, and refining confidence thresholds over time.

BlueTweak’s customer intelligence capabilities also reduce the overhead of oversight by summarising tickets, highlighting intent patterns, and surfacing anomalies that require human review.

“Human-in-the-loop AI only works when feedback is operationalised. If human input is not structured back into the system, you don’t get learning, you get stagnation.”

Radu Dumitrescu, Head of Presale & Digital Transformation at BlueTweak

For a real-world example, BlueTweak’s AI-powered customer support transformation for an e-commerce client shows how embedding human review into automated workflows improved efficiency, reduced interaction times, and maintained service quality at scale. By combining automated handling for routine queries with human oversight on more complex or sensitive interactions, the team was able to scale support volume without increasing operational risk. The result was not just faster responses, but more consistent resolution quality across both AI- and human-led interactions.

Human-in-the-loop AI is not a constraint on automation. It’s the system that makes automation viable in real customer environments, where accuracy, trust, and context matter as much as speed.

The teams seeing the strongest results are the ones designing the loop intentionally: aligning oversight with interaction risk, setting confidence thresholds based on real performance data, and turning every piece of human feedback into measurable model improvement.

As AI adoption accelerates across customer support, the gap is no longer between companies using AI and those that are not. It is between those treating AI as a standalone tool and those operating it as a controlled, continuously improving system.

That distinction shows up quickly in performance. Without structured human oversight, AI scale introduces inconsistency, hidden errors, and declining trust. With it, AI becomes a force multiplier, increasing efficiency without compromising quality.

For support leaders, the question today is whether their current setup is designed to sustain quality as volume grows.

If you want to see how this works in practice, BlueTweak gives you a way to apply human-in-the-loop AI from day one, with built-in approval workflows, performance analytics, and feedback loops that continuously improve your AI outputs.

You can start with a demo of the BlueTweak platform, or try it yourself with a free trial, and see how a structured human-in-the-loop approach changes both the speed and quality of your support operations.

Human-in-the-loop AI refers to an approach where machine learning systems and humans work together throughout the model training and deployment process. Instead of relying on fully autonomous outputs, humans provide input, feedback, and intervention to guide ML models and ensure accurate learning. This often involves data labeling, human review, and active learning loops where data scientists and domain experts refine model behavior over time.

Human-in-the-loop improves accuracy by combining machine learning with human intelligence during the learning process. When ML models produce uncertain outputs, humans provide feedback, correct errors, and supply labeled data. This human involvement helps mitigate bias and ensures models adapt to real-world customer interaction patterns. In customer support, this reduces misleading outputs and improves decision-making across high-risk interactions.

Human-in-the-loop in AI is used to ensure that automated systems remain accurate, safe, and aligned with real-world context. It is commonly applied in supervised learning, reinforcement learning, and other machine learning settings where humans play a role in refining model training. In customer support, HITL approaches help balance automation with human interaction, especially when dealing with complex or emotionally sensitive cases.

Human-in-the-loop AI examples include customer support platforms where agents review AI-generated responses before sending, autonomous vehicles requiring human intervention in edge cases, and ML pipelines that rely on active learning and human feedback to improve performance. These systems combine human capabilities with machine intelligence, ensuring that large datasets are continuously refined through labels and direct feedback from subject matter experts.

Machine learning systems use human feedback to improve model performance through iterative training processes. This can involve supervised learning with labeled data, interactive machine learning, or machine teaching approaches where humans provide feedback during training. By incorporating human input into the ML pipeline, models can learn more effectively, reduce errors, and improve performance across unsupervised learning and reinforcement learning environments.

As Head of Digital Transformation, Radu oversees multiple departments across the company, giving him visibility into what happens in product and what customers need. With more than 8 years in technology, and part of BlueTweak since the beginning, Radu moved from a developer role (addressing end-customer needs) to a more business-oriented one, where he can influence and stay in touch with the people who use the technology.