Human-in-the-Loop Customer Support: Scale AI Without Losing Oversight



Human-in-the-loop customer support is the scalable model for combining AI efficiency with human judgment in modern service operations. It enables AI agents to handle repetitive tasks at speed, while human oversight is applied where empathy, context, and complex decision-making are required. In 2026, the challenge is no longer whether to use AI in the contact center, but how to structure the right balance of automation, human expertise, and control without creating bottlenecks.

Human-in-the-loop (HITL) customer support is a model where AI systems handle customer interactions with structured human oversight applied at key decision points to ensure accuracy, quality, and trust.

Unlike fully automated systems, HITL introduces human involvement where it matters most: not at every step, but never absent entirely. AI handles routine tasks at speed, while human agents step in for review, judgment, and complex issues.

This distinction matters more as AI scales. As generative AI and machine learning models take on a larger share of customer interactions, the primary risk becomes loss of control. Without the right loop, AI systems (often powered by modern AI customer support software) can produce generic responses, mishandle edge cases, or erode customer trust at scale.

This is exactly the challenge platforms like BlueTweak are designed to solve: not just enabling AI in the contact center, but structuring how human oversight operates alongside it as volume grows.

A 2025 Gartner survey found that 95% of customer service leaders plan to retain human agents to define how AI is used, even as automation scales. At the same time, AI is expected to resolve up to 80% of routine interactions in the coming years. The implication is clear: the challenge is no longer whether AI can scale, but how to maintain human oversight as it does.

To scale effectively, teams need a structured way to think about human oversight. Not all interactions require the same level of human involvement, and applying a single model across all AI workflows is where most systems fail. The practical solution is a three-level framework:

| HITL Level | Example Interaction Types | AI Confidence Threshold | Human Role | Best For |
|---|---|---|---|---|
| Human-on-the-loop | FAQs, order status, password resets | High (>90%) | Monitor and audit | Proven, high-volume routine queries |
| Human-in-the-loop | Refund requests, billing queries, complaints | Medium (60–90%) | Review and approve before sending | Sensitive topics; new AI deployments |
| Human-as-the-loop | Crisis, VIP, complex multi-issue | Low (<60%) | Lead interaction with AI assist | High-stakes or emotionally complex cases |

This model aligns oversight with risk and AI confidence, rather than volume alone.
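The table can be read as a routing rule. Below is a minimal Python sketch, assuming the AI reports a confidence score between 0 and 1 and that each interaction carries a category label. The category names, the `route` function, and the choice to treat exactly 90% as "medium" are illustrative assumptions, not a BlueTweak API.

```python
from enum import Enum


class Tier(Enum):
    ON_THE_LOOP = "human-on-the-loop"   # AI resolves; humans monitor and audit
    IN_THE_LOOP = "human-in-the-loop"   # AI drafts; a human approves before sending
    AS_THE_LOOP = "human-as-the-loop"   # a human leads; AI assists


# Hypothetical category labels, loosely based on the table's example column.
ALWAYS_HUMAN_LED = {"crisis", "vip", "multi_issue"}
SENSITIVE = {"refund", "billing", "complaint"}

HIGH_CONFIDENCE = 0.90  # strictly above this: eligible for human-on-the-loop
LOW_CONFIDENCE = 0.60   # strictly below this: human-as-the-loop


def route(category: str, confidence: float) -> Tier:
    """Choose an oversight tier from the interaction category and AI confidence (0.0-1.0)."""
    # High-stakes categories and low-confidence answers go to a human lead.
    if category in ALWAYS_HUMAN_LED or confidence < LOW_CONFIDENCE:
        return Tier.AS_THE_LOOP
    # Sensitive topics always get human approval, however confident the AI is.
    if category in SENSITIVE or confidence <= HIGH_CONFIDENCE:
        return Tier.IN_THE_LOOP
    return Tier.ON_THE_LOOP


print(route("order_status", 0.95).value)  # human-on-the-loop
print(route("refund", 0.97).value)        # human-in-the-loop
print(route("faq", 0.45).value)           # human-as-the-loop
```

Checking the category before the confidence score means a very confident refund reply still waits for approval, which matches the table's placement of sensitive topics in human-in-the-loop, while a low-confidence answer on any topic goes to a human lead.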

PwC’s 2025 Customer Experience Survey found that only 30% of consumers say AI has improved customer service, while a significantly larger share reports neutral or negative outcomes, highlighting the continued importance of human oversight in AI-driven support models.

At a small scale, human oversight feels manageable, but at an enterprise scale, it becomes a problem. When AI handles hundreds of interactions, human review queues work; when it handles tens of thousands, the same model breaks.

Manual review doesn’t scale the way automation does: every additional interaction adds review time, so reviewer headcount has to grow in step with volume. This is one of the core challenges of implementing AI customer support once deployment moves beyond the pilot stage: traditional QA models break down, and teams are forced to rethink operational structure rather than just tooling. A review queue that works at 100 interactions per day becomes a bottleneck at 10,000. Teams either slow down response times or quietly start skipping reviews.
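A back-of-the-envelope calculation makes the bottleneck concrete. This Python sketch assumes hypothetical figures: 2 minutes to review one AI interaction and 6 hours (360 minutes) of review time per reviewer per day. Real numbers will differ by channel and interaction type.

```python
import math


def reviewers_needed(daily_volume: int, review_share: float,
                     minutes_per_review: float, minutes_per_reviewer_day: float) -> int:
    """Full-time reviewers needed to clear one day's review queue."""
    review_minutes = daily_volume * review_share * minutes_per_review
    return math.ceil(review_minutes / minutes_per_reviewer_day)


# Hypothetical assumptions: review everything, 2 minutes per review, 360 review minutes per reviewer-day.
for volume in (100, 10_000):
    print(volume, reviewers_needed(volume, review_share=1.0,
                                   minutes_per_review=2, minutes_per_reviewer_day=360))
# 100 1
# 10000 56
```

Under these assumptions, reviewing every interaction at 10,000 per day takes 56 full-time reviewers; reviewing only a 10% subset (`reviewers_needed(10_000, 0.10, 2, 360)`) takes 6.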

AI errors also compound with volume. A 2% error rate seems acceptable until, at 10,000 interactions per day, it becomes 200 mistakes per day. Without a structured catch mechanism, those errors slip through unnoticed.
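The same arithmetic shows why a random spot-check is not a catch mechanism. A minimal Python sketch, assuming a hypothetical 5% uniform random QA sample and reviewers who catch every error they see (a generous assumption):

```python
def expected_errors(daily_volume: int, error_rate: float) -> float:
    """Expected number of erroneous AI responses per day."""
    return daily_volume * error_rate


def expected_caught(daily_volume: int, error_rate: float, sample_share: float) -> float:
    """Errors caught by reviewing a uniform random sample of interactions.

    Assumes reviewers spot every error in the interactions they see, so the
    catch rate equals the share of traffic sampled.
    """
    return expected_errors(daily_volume, error_rate) * sample_share


errors = expected_errors(10_000, 0.02)
caught = expected_caught(10_000, 0.02, 0.05)
print(f"{errors:.0f} errors/day, {caught:.0f} caught, {errors - caught:.0f} missed")
# 200 errors/day, 10 caught, 190 missed
```

Random sampling catches errors only in proportion to the share of traffic reviewed, so raising the catch rate this way means raising review volume. Targeting review at the interactions most likely to be wrong is the alternative.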

Then comes oversight fatigue. When human agents review large volumes of routine AI interactions, cognitive load increases, attention drops, and the interactions that most need human judgment (edge cases, emotionally charged situations) are the ones most likely to be missed.

A 2025 Qualtrics report found that nearly one in five consumers who used AI for customer service saw no benefit, with failure rates almost four times higher than for other AI use cases. As volume increases, the challenge shifts to detecting where AI has gone wrong in the first place.

The moment most organizations realize something is wrong isn’t when AI fails… It’s when they can’t explain why it failed at scale. Oversight doesn’t break because of the technology. It breaks because the process hasn’t evolved to match it.

Radu Dumitrescu, Head of Presale & Digital Transformation, BlueTweak

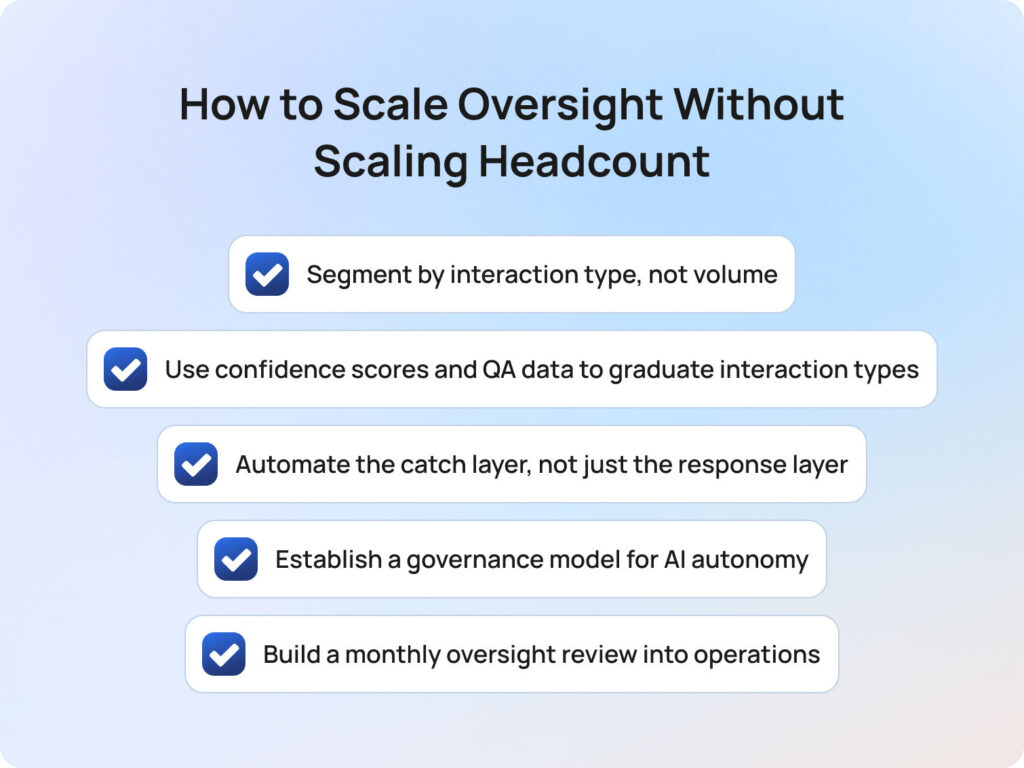

Fixing the oversight problem means redesigning how oversight works. At a small scale, human-in-the-loop customer support often looks like manual review layered on top of AI. But as interaction volume grows, that model quickly becomes unsustainable.

The teams that scale successfully treat oversight as a dynamic system, one that evolves alongside their AI workflows. That shift, from manual process to structured model, is what allows AI and human agents to operate efficiently at scale.

The most common mistake is applying the same level of human oversight across every AI interaction. It feels safer, but in practice, it creates unnecessary friction and limits scale.

A more effective approach is to segment interactions based on complexity, risk, and AI confidence. In most contact centers, a significant proportion of volume sits in routine, repeatable queries, following predictable patterns and requiring limited human judgment.

Gartner predicts that AI will autonomously resolve up to 80% of common customer service issues in the coming years, highlighting just how much of today’s support demand is made up of low-complexity, high-volume interactions.

But in practice, most organizations are earlier in that journey.

In most environments we work with, the most routine queries, the ones that can be confidently automated today, typically make up around 20–30% of total volume. The opportunity is not just to automate more, but to expand that category safely over time.

Radu Dumitrescu, Head of Presale & Digital Transformation, BlueTweak

Typically, this high-volume segment includes routine queries such as FAQs, order status checks, and password resets.

These interactions are well understood and low risk, making them ideal for human-on-the-loop oversight, where AI handles the interaction, and humans monitor performance at a system level.

By concentrating human involvement on the remaining, higher-risk categories, teams reduce cognitive load, improve speed, and maintain control, all without increasing headcount.

Segmentation is only the starting point; the real value comes from movement between tiers. AI systems are not static; they improve with training, feedback, and exposure to real-world interactions. But many organizations fail to capitalize on this because they don’t define how interactions “graduate” to lower levels of oversight.

Instead, interaction types remain stuck in human-in-the-loop indefinitely, creating a ceiling on efficiency. To avoid this, teams need clear, measurable criteria for progression. At scale, improving average handle time becomes less about agent speed and more about eliminating unnecessary human review through intelligent automation.

To ensure consistency, organizations often rely on structured customer support metrics to define thresholds for AI performance and human oversight transitions. In practice, that usually means aligning around a small set of performance signals:

More importantly, ownership of this decision must be explicit. Without a structured graduation model, AI never earns trust, and oversight never scales down.
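Graduation criteria are easiest to apply consistently when they are written down as explicit thresholds. A minimal Python sketch, assuming per-type metrics are already collected for a review period; every threshold value below is a hypothetical placeholder, not a recommended target.

```python
from dataclasses import dataclass


@dataclass
class TypeMetrics:
    """One review period's results for one interaction type handled by AI."""
    interaction_type: str
    sample_size: int        # AI-handled interactions reviewed in the period
    qa_score: float         # mean QA score, 0-100
    csat: float             # mean customer satisfaction, 1-5
    error_rate: float       # share of reviewed interactions containing an error
    escalation_rate: float  # share handed to a human after AI handling


@dataclass
class GraduationCriteria:
    """Hypothetical thresholds an interaction type must meet to move down a tier."""
    min_sample: int = 500
    min_qa: float = 90.0
    min_csat: float = 4.2
    max_error_rate: float = 0.02
    max_escalation_rate: float = 0.05


def failed_criteria(m: TypeMetrics, c: GraduationCriteria) -> list[str]:
    """Names of the criteria the interaction type does not yet meet."""
    checks = {
        "sample_size": m.sample_size >= c.min_sample,
        "qa_score": m.qa_score >= c.min_qa,
        "csat": m.csat >= c.min_csat,
        "error_rate": m.error_rate <= c.max_error_rate,
        "escalation_rate": m.escalation_rate <= c.max_escalation_rate,
    }
    return [name for name, passed in checks.items() if not passed]


order_status = TypeMetrics("order_status", 1_200, 94.5, 4.5, 0.011, 0.03)
refunds = TypeMetrics("refund", 640, 91.0, 4.3, 0.034, 0.04)
criteria = GraduationCriteria()
print(failed_criteria(order_status, criteria))  # []
print(failed_criteria(refunds, criteria))       # ['error_rate']
```

Returning the failing criteria instead of a bare yes/no gives the accountable owner something concrete to act on; the function informs the decision, and the named owner still makes it.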

Most organizations focus their AI efforts on generating responses faster, but few invest in detecting when those responses shouldn’t be trusted. At scale, the idea that human agents can manually review every interaction becomes unrealistic. Instead, oversight needs to shift from blanket review to intelligent flagging, supported by broader operational systems such as workforce management, which help ensure the right human capacity is applied at the right moments.

This is where AI can support human oversight directly, by surfacing the interactions that actually require attention. For example, effective systems will automatically flag:

The result is a system where human agents are no longer acting as passive reviewers, but as targeted decision-makers, focusing their expertise where it has the greatest impact.
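Intelligent flagging can be sketched as a set of rules that turn signals into review reasons. This Python sketch assumes four hypothetical signals per interaction (AI confidence, a sentiment score, topic tags, and recent contact count). The thresholds and topic names are illustrative assumptions, not a vendor's rule set.

```python
from dataclasses import dataclass, field


@dataclass
class Interaction:
    interaction_id: str
    confidence: float              # AI's confidence in its response, 0.0-1.0
    sentiment: float               # customer sentiment, -1.0 (negative) to 1.0 (positive)
    topics: set[str] = field(default_factory=set)
    contacts_last_7_days: int = 1  # includes this contact


SENSITIVE_TOPICS = {"refund", "billing", "complaint", "cancellation"}


def flag_reasons(i: Interaction) -> list[str]:
    """Why this interaction needs a human look; an empty list means no flag."""
    reasons = []
    if i.confidence < 0.60:
        reasons.append("low_confidence")
    if i.sentiment <= -0.5:
        reasons.append("negative_sentiment")
    if i.topics & SENSITIVE_TOPICS:
        reasons.append("sensitive_topic")
    if i.contacts_last_7_days > 1:
        reasons.append("repeat_contact")
    return reasons


def review_queue(interactions: list[Interaction]) -> list[tuple[str, list[str]]]:
    """Flagged interactions only, those with the most reasons first."""
    flagged = [(i.interaction_id, flag_reasons(i)) for i in interactions]
    flagged = [(iid, reasons) for iid, reasons in flagged if reasons]
    return sorted(flagged, key=lambda pair: len(pair[1]), reverse=True)


batch = [
    Interaction("a1", confidence=0.97, sentiment=0.4),
    Interaction("a2", confidence=0.55, sentiment=-0.7, topics={"refund"}),
    Interaction("a3", confidence=0.92, sentiment=0.1, contacts_last_7_days=3),
]
for iid, reasons in review_queue(batch):
    print(iid, reasons)
# a2 ['low_confidence', 'negative_sentiment', 'sensitive_topic']
# a3 ['repeat_contact']
```

Only `a2` and `a3` reach a human; `a1`, the routine high-confidence case, stays under system-level monitoring.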

As AI systems take on more responsibility, the question of control becomes more important, not less. Who decides when an AI agent can operate more independently? What data justifies that decision? And who is accountable if something goes wrong?

In many organizations, these decisions happen informally, often driven by operational pressure rather than performance data. Over time, this creates inconsistency, risk, and a lack of visibility into how AI is actually being used.

A formal governance model introduces structure by defining:

This turns scaling from a reactive decision into a controlled progression, where human oversight evolves in step with AI capability.
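One way to make "who decides, on what evidence" explicit is to record every tier change as data that must name an owner and cite evidence. A minimal Python sketch, assuming a hypothetical `TierChange` record and two illustrative rules: every change needs an owner and evidence, and oversight is reduced one tier at a time.

```python
from dataclasses import dataclass
from datetime import date

# Ordered from most to least human oversight.
TIERS = ["human-as-the-loop", "human-in-the-loop", "human-on-the-loop"]


@dataclass(frozen=True)
class TierChange:
    """A hypothetical record of one decision to change an interaction type's tier."""
    interaction_type: str
    from_tier: str
    to_tier: str
    owner: str        # the named person accountable for this decision
    evidence: str     # reference to the performance review that justifies it
    decided_on: date


def validate(change: TierChange) -> None:
    """Raise ValueError if the change breaks one of the illustrative governance rules."""
    if change.from_tier not in TIERS or change.to_tier not in TIERS:
        raise ValueError("unknown tier")
    if not change.owner.strip():
        raise ValueError("every tier change needs a named owner")
    if not change.evidence.strip():
        raise ValueError("every tier change needs supporting evidence")
    # Reducing oversight happens one tier at a time; increasing it may happen at once.
    if TIERS.index(change.to_tier) - TIERS.index(change.from_tier) > 1:
        raise ValueError("reduce oversight one tier at a time")


audit_log: list[TierChange] = []


def record(change: TierChange) -> None:
    """Validate a change, then append it to the audit log."""
    validate(change)
    audit_log.append(change)


record(TierChange("order_status", "human-in-the-loop", "human-on-the-loop",
                  owner="Support Operations Lead", evidence="March monthly review",
                  decided_on=date(2026, 4, 2)))
print(len(audit_log))  # 1
```

Keeping the log append-only gives a simple answer to "who approved this, and why" when something goes wrong.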

Oversight is not something you configure once and leave in place. It needs to evolve continuously as AI systems learn and customer expectations shift. That’s why leading teams build oversight into their operating rhythm: every month, they review performance across key dimensions (QA scores, customer satisfaction, error rates, and escalation patterns), broken down by interaction type.

Each interaction category should result in a clear decision:

Over time, this creates a feedback loop where human judgment actively shapes how AI systems perform. This is what transforms human-in-the-loop from a safety net into a competitive advantage.
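The monthly review starts with per-type numbers. A minimal Python sketch, assuming each reviewed interaction is exported as a record with a hypothetical schema (type, QA score, CSAT, error flag, escalation flag); the sample records are made up for illustration.

```python
from collections import defaultdict
from statistics import mean


def monthly_summary(records: list[dict]) -> dict[str, dict[str, float]]:
    """Aggregate one month of reviewed AI interactions by interaction type.

    Each record is a dict with keys: type, qa (0-100), csat (1-5),
    error (bool), escalated (bool).
    """
    by_type: dict[str, list[dict]] = defaultdict(list)
    for r in records:
        by_type[r["type"]].append(r)

    summary = {}
    for interaction_type, rows in by_type.items():
        n = len(rows)
        summary[interaction_type] = {
            "n": n,
            "qa": mean(r["qa"] for r in rows),
            "csat": mean(r["csat"] for r in rows),
            "error_rate": sum(r["error"] for r in rows) / n,          # True counts as 1
            "escalation_rate": sum(r["escalated"] for r in rows) / n,
        }
    return summary


records = [
    {"type": "order_status", "qa": 96, "csat": 5, "error": False, "escalated": False},
    {"type": "order_status", "qa": 92, "csat": 4, "error": False, "escalated": False},
    {"type": "refund", "qa": 81, "csat": 3, "error": True, "escalated": True},
    {"type": "refund", "qa": 89, "csat": 4, "error": False, "escalated": True},
]
for interaction_type, stats in monthly_summary(records).items():
    print(interaction_type, stats)
```

The per-type breakdown is the point: a blended average across all types can look healthy while a single category (refunds, in this made-up sample) is the one that needs a different decision.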

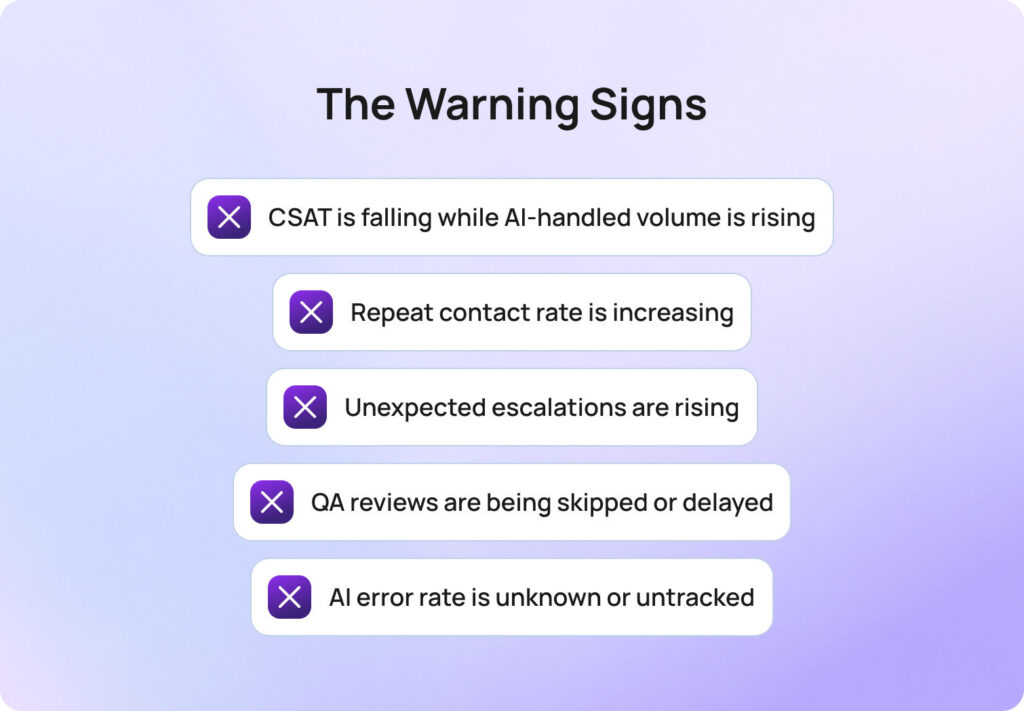

Most teams don’t realize their oversight model is failing until customer experience has already started to degrade. But breakdown doesn’t happen all at once; it shows up gradually, in metrics that seem unrelated at first, or in small operational workarounds that quietly become the norm.

The key is knowing what to look for early, before these signals compound into systemic issues. In most cases, the problem isn’t that AI is underperforming; it’s that oversight hasn’t evolved to match the scale of deployment.

Here are the clearest indicators that your human-in-the-loop customer support model is falling behind:

Individually, these signals may seem manageable. Together, they point to a deeper issue: a system designed for low-volume oversight being stretched beyond its limits.

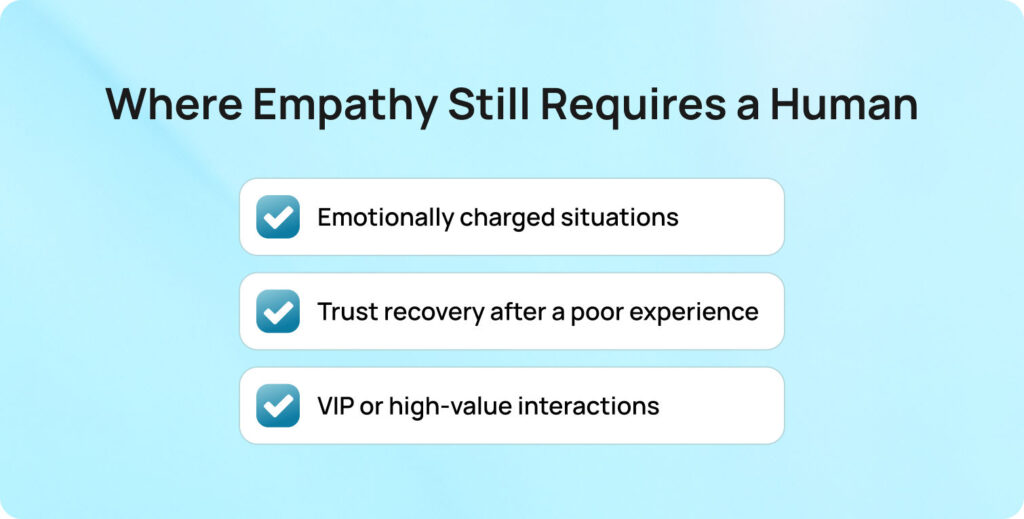

Even in highly automated environments, where AI chatbots and human agents share the customer service workload, there are moments where human involvement is non-negotiable. These moments are defined not by complexity alone but by context: situations where emotional intelligence, nuance, and accountability directly influence the outcome of the interaction.

The risk in over-automation is not just getting the answer wrong; it is getting the moment wrong. There are three categories where human agents should remain central, regardless of AI confidence scores:

This is not just a theoretical distinction. PwC’s Customer Experience research shows that 86% of consumers say human interaction remains important to their overall brand experience, reinforcing that AI and human agents serve fundamentally different roles in the customer experience.

The implication is clear: AI should handle volume, but humans should handle moments that matter.

This is where human-in-the-loop customer support becomes a way to protect customer trust at scale.

BlueTweak’s approach to human-in-the-loop customer support extends across modern omnichannel customer support and is built around the idea that AI and human agents should operate as a coordinated system, not separate layers.

At the human-on-the-loop level, BlueTweak’s AI chatbot and voicebot handle high-volume, routine tasks autonomously across channels. This is where scale is achieved.

At the human-in-the-loop level, suggested replies act as a built-in co-pilot. AI drafts responses, but a live agent reviews and approves before sending. This preserves speed while maintaining human judgment.
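The approve-before-send step can be sketched as a small state machine. This is a generic Python illustration, not BlueTweak's implementation: `SuggestedReply`, `approve`, and `reject` are hypothetical names, and `send` stands in for whatever delivers the message on a given channel.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class SuggestedReply:
    """A hypothetical AI-drafted reply waiting for agent review."""
    ticket_id: str
    ai_draft: str
    status: str = "pending"           # pending -> sent, or pending -> rejected
    final_text: Optional[str] = None
    edited: bool = False              # did the agent change the draft before sending?


def approve(reply: SuggestedReply, send: Callable[[str, str], None],
            agent_text: Optional[str] = None) -> None:
    """Send the draft (or the agent's edited version); nothing is sent without this call."""
    if reply.status != "pending":
        raise ValueError(f"reply for {reply.ticket_id} is already {reply.status}")
    final = reply.ai_draft if agent_text is None else agent_text
    reply.edited = final != reply.ai_draft
    reply.final_text = final
    send(reply.ticket_id, final)
    reply.status = "sent"


def reject(reply: SuggestedReply) -> None:
    """Discard the draft; the agent writes the reply from scratch instead."""
    if reply.status != "pending":
        raise ValueError(f"reply for {reply.ticket_id} is already {reply.status}")
    reply.status = "rejected"


outbox: list[tuple[str, str]] = []
draft = SuggestedReply("T-1042", "Your refund was issued today.")
approve(draft, send=lambda tid, text: outbox.append((tid, text)),
        agent_text="Your refund was issued today. You will receive a confirmation email shortly.")
print(draft.status, draft.edited)  # sent True
```

Recording whether the agent edited the draft yields a simple per-type signal: a falling edit rate is one piece of evidence that an interaction type may be ready for less oversight.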

To address the scaling problem directly, BlueTweak focuses on the catch layer:

This reduces oversight fatigue and makes real-time collaboration between AI and human agents viable at scale.

The quality loop is where the system compounds value. BlueTweak’s quality assurance module evaluates AI-handled interactions on the same criteria as human ones: accuracy, tone, and resolution. This creates the data needed to safely expand AI autonomy over time.

Finally, customer service analytics surface the exact signals that matter: CSAT trends, repeat contact rates, and performance by interaction type. This turns oversight from a manual process into a measurable system.
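Repeat contact rate has no single standard definition. A minimal Python sketch, assuming it is measured as the share of contacts followed by another contact from the same customer within seven days; both the definition and the window are assumptions. Computed separately for each interaction type, a rising rate in AI-handled categories can indicate answers that did not actually resolve the issue.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def repeat_contact_rate(contacts: list[tuple[str, datetime]],
                        window: timedelta = timedelta(days=7)) -> float:
    """Share of contacts followed by another contact from the same customer within `window`.

    `contacts` is a list of (customer_id, timestamp) pairs.
    """
    if not contacts:
        return 0.0
    by_customer: dict[str, list[datetime]] = defaultdict(list)
    for customer_id, ts in contacts:
        by_customer[customer_id].append(ts)

    repeats = 0
    for times in by_customer.values():
        times.sort()
        # Count each contact whose next contact from the same customer falls inside the window.
        for earlier, later in zip(times, times[1:]):
            if later - earlier <= window:
                repeats += 1
    return repeats / len(contacts)


contacts = [
    ("cust-a", datetime(2026, 3, 1, 9)),
    ("cust-a", datetime(2026, 3, 3, 14)),   # back within 2 days: the first contact counts
    ("cust-b", datetime(2026, 3, 1, 11)),
    ("cust-b", datetime(2026, 3, 20, 10)),  # 19 days later: outside the window
    ("cust-c", datetime(2026, 3, 2, 16)),
]
print(repeat_contact_rate(contacts))  # 0.2
```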

A strong example of this in practice can be seen in BlueTweak’s AI-powered customer support transformation for an e-commerce client, where high volumes of repetitive queries were automated alongside structured human oversight, resulting in faster resolutions and improved operational efficiency.

The shift toward human-in-the-loop customer support today is centered on designing a collaborative model where both artificial intelligence and people play to their strengths.

AI agents are increasingly effective at handling repetitive tasks, from basic order updates to standard account queries. This frees the contact center to focus human effort where it matters most: moments requiring human empathy, human expertise, and nuanced understanding of customer context.

But scale changes the challenge. As AI agents take on more volume, the quality of oversight becomes the differentiator. Without structured systems for feedback, segmentation, and governance, even the best AI systems can degrade customer experience rather than improve it.

The organizations succeeding today are not those replacing humans, but those redefining when humans step in. They use customer data and other relevant data to continuously refine when automation is safe and when human judgment is required to handle complex or emotionally sensitive interactions.

Ultimately, the future of customer support is not fully automated; it is intelligently balanced. A system where AI delivers scale, and humans preserve trust, nuance, and emotional intelligence in every conversation.

As organizations evolve, success will depend on how well they design systems where technology and human capability work together, not in isolation.

Ready to scale human-in-the-loop customer support without losing control? Speak with one of our customer support experts to explore how it works in practice.

Human-in-the-loop customer support is a model where artificial intelligence handles routine customer interactions, while humans provide oversight, judgment, and emotional context where needed. It ensures that AI agents can scale efficiency without removing human empathy from the customer experience.

While AI is effective at managing repetitive tasks, it struggles in situations requiring human empathy, contextual understanding, or nuanced decision-making. In many contact centers, humans still step in to manage exceptions, ensure quality, and maintain trust during complex conversations or follow-ups.

A well-designed HITL system improves customer experience by combining AI speed with human empathy and expertise. AI agents process large volumes of interactions, while humans ensure accuracy, fairness, and emotional intelligence in more sensitive conversations. This leads to better outcomes across the entire customer journey.

Customer data and other relevant data are essential for training AI systems, improving accuracy, and deciding when human intervention is needed. Teams often rely on structured feedback loops in which feedback from human agents continuously improves both automation and oversight quality.

The future of customer support is a collaborative model where AI and humans continuously learn from each other. AI will handle increasing volumes of routine interactions, while humans focus on complex, high-empathy cases. As technology evolves, solutions like BlueTweak are enabling this balance at scale, and the most successful companies will be those that combine automation with strong human expertise, not replace it.

As Head of Digital Transformation, Radu oversees multiple departments across the company, providing visibility into what happens in product and what customers need. With more than 8 years in technology, and part of BlueTweak since the beginning, Radu moved from a developer role (addressing end-customer needs) to a more business-oriented one, to have a greater influence and work directly with the people who use the technology.